Simulations and modeling take the guesswork out of facility changes by taking ideas for a test drive. Although the methodology of this discipline has been around for a while, recent advancements in computing have allowed it to grow by leaps and bounds. At this point, we are able to try out process alternatives for everything from a single unit operation to an entire manufacturing plant before risking capital expenditures. Doing so allows us to either justify a hypothesis or dismiss an idea early.

By improving the understanding of manufacturing, warehousing, and quality control operations, of air and fluid flows, and of material and personnel movements, computer modeling and simulations support an optimal facility design.

The field of modeling and simulation is extensive and includes several types of modeling techniques, for example discrete event simulations (DES), process modeling, computational fluid dynamics (CFD), building information models (BIM), and augmented and virtual reality (AR/VR). This article focuses on the use of discrete event simulations for facility design and right-sizing in the biopharmaceutical industry, including the general methodology that should be followed to complete a successful simulation study.

Executing a successful simulation study

Simulations allow management to make confident, data-driven decisions, often very different ones from those originally under consideration. To test out possible solutions, employ this approach:

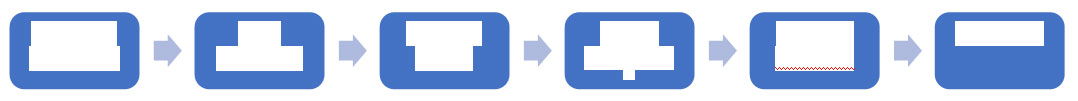

Figure 1: Typical modeling and simulation study methodology

As a first step, the modeling objectives should be defined. For a facility design effort, the primary objective is usually to right-size the facility so that it satisfies target demands in the most cost-effective manner. Right-sizing involves estimating equipment, personnel, utilities, site logistics (material and personnel movement), and spaces for production, for staging raw materials, intermediates, and finished goods, and for support functions (e.g., warehousing, quality assurance/quality control, maintenance, administration). These operations and functional areas influence and are influenced by the facility footprint.

Next, the metrics supporting these objectives and the potential scenarios to be analyzed need to be identified. Though this step sounds trivial, it is very important and can influence the study duration. Typical metrics for facility design efforts include throughput, equipment and headcount needs, raw material or intermediate staging space in manufacturing, pallet spaces in the warehouse, and utility requirements (which influence mechanical, electrical, and plumbing sizing). Typically, scenarios focus on improving efficiency, throughput, or safety, or on justifying certain capital projects such as renovations, upgrades, and expansions. Scenarios may also cover automation efforts and strategy changes.

After establishing the objectives and metrics, relevant supporting data must be gathered to develop a baseline model. It is important to note that the model will only be as good as the data inputs used to build it. Data collection efforts can rely on inputs from subject matter experts, laboratory research data, or actual operational data from a site. Assumptions must be established where data is not available, for example in the case of new technology or when the sample size is too small. To characterize the uncertainties involved, it is highly recommended to use a range of values instead of average values or point estimates. Whenever possible, actual data should be used and fitted to statistical distributions to capture the variability influencing operations.
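As a rough illustration of fitting data to a distribution, the sketch below estimates a lognormal from a handful of observed process durations and falls back to a triangular distribution when only expert estimates (minimum, most likely, maximum) exist. The duration values, the choice of lognormal and triangular distributions, and all function names are assumptions made for this example; a real study would test several candidate distributions against the data.

```python
import math
import random
import statistics

def fit_lognormal(samples):
    """Estimate lognormal parameters from the mean and sample
    standard deviation of the log-transformed observations."""
    logs = [math.log(x) for x in samples]
    return statistics.mean(logs), statistics.stdev(logs)

# Hypothetical observed durations (hours) for one unit operation.
observed_hours = [3.8, 4.1, 4.4, 4.0, 5.2, 3.9, 4.7, 4.3, 6.1, 4.2]
mu, sigma = fit_lognormal(observed_hours)

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Sample from the fitted distribution instead of using a single average.
draws = [rng.lognormvariate(mu, sigma) for _ in range(10_000)]

# No data available: use a range from a subject matter expert
# (hypothetical min / most likely / max, in hours).
sme_low, sme_mode, sme_high = 2.0, 3.0, 6.0
sme_draws = [rng.triangular(sme_low, sme_high, sme_mode) for _ in range(10_000)]

print(f"fitted median: {math.exp(mu):.2f} h, sampled median: "
      f"{statistics.median(draws):.2f} h")
```

Sampling from either distribution gives the model a spread of durations rather than a point estimate, which is what lets the downstream results express risk.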

Models developed using the DES technique, a special case of Monte Carlo simulation that uses a time-advance mechanism, are best suited to capture these variabilities and uncertainties by running multiple replications and performing ‘what-if’ analyses. Since the inputs are probabilistic, the outputs will also be stochastic in nature. This allows end-users to make decisions based on their appetite for risk.
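To make the time-advance mechanism and the idea of replications concrete, here is a minimal sketch of a discrete event simulation written with only the Python standard library. It models batches queuing for a small pool of identical equipment units. Every parameter (number of units, arrival and processing times, 30 replications) and every name is hypothetical, and commercial DES packages provide far richer constructs than this toy event loop.

```python
import heapq
import random
import statistics
from collections import deque

def run_replication(seed, n_units=2, n_batches=200, mean_interarrival=3.0,
                    proc_low=3.0, proc_mode=4.0, proc_high=7.0):
    """Run one replication and return the mean batch wait (hours)."""
    rng = random.Random(seed)
    events = []  # future event list: (time, sequence number, kind)
    seq = 0
    clock = 0.0
    for _ in range(n_batches):  # pre-schedule random batch arrivals
        clock += rng.expovariate(1.0 / mean_interarrival)
        heapq.heappush(events, (clock, seq, "arrival"))
        seq += 1

    free_units = n_units
    waiting = deque()  # arrival times of batches waiting, FIFO
    waits = []
    while events:
        # Time advance: jump straight to the next scheduled event.
        now, _, kind = heapq.heappop(events)
        if kind == "arrival":
            waiting.append(now)
        else:  # "departure" frees one equipment unit
            free_units += 1
        # Start processing while a unit and a batch are both available.
        while free_units > 0 and waiting:
            waits.append(now - waiting.popleft())
            free_units -= 1
            finish = now + rng.triangular(proc_low, proc_high, proc_mode)
            heapq.heappush(events, (finish, seq, "departure"))
            seq += 1
    return statistics.mean(waits)

# Multiple replications, each with its own random number seed.
results = [run_replication(seed) for seed in range(30)]
deciles = statistics.quantiles(results, n=10)
p10, p50, p90 = deciles[0], deciles[4], deciles[8]
print(f"mean wait per batch: P10={p10:.2f} h, P50={p50:.2f} h, P90={p90:.2f} h")
```

Reporting a range such as P10 to P90 rather than one number is what allows a decision-maker to size equipment against a chosen level of risk.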

Recent advancements in computing power and graphics have improved visualization capabilities of these models. Figure 2 shows a screenshot of a DES model. Appropriate animation and visualization help in model verification, and improve communication and stakeholder buy-in. The 3D animations can help better visualize traffic within key corridors, any congestion points, adequacy of intermediate staging spaces, appropriate adjacencies needed, and other factors.

Figure 2: Discrete event simulation (DES) models help characterize uncertainty and variability inherent to the operations, while helping visually communicate the results.

A baseline model should be created with an initial set of assumptions or with actual current-state operating conditions and constraints. Results from this baseline must be verified and/or validated to ensure the model is behaving as intended. At this step, assumptions may be fine-tuned, or additional data may be required to more accurately mimic the process being modeled. After completing the verification/validation phase, the model can be used to perform scenario analysis to determine how changing different variables impacts the modeled metrics. Sensitivity analysis can also be performed to identify the variables or assumptions that most influence the design metrics. These verified models can serve as an excellent tool for identifying bottlenecks and key areas of concern. This information can then be used to develop risk mitigation plans to help manage the uncertainties associated with the design and construction of facilities.
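A scenario comparison of this kind can be sketched in a few lines. The example below is deliberately simplified: it replaces a full facility model with a single equipment unit whose queue wait follows the Lindley recursion (next wait = max(0, current wait + processing time - time until next arrival)), and it reuses the same random seeds across scenarios so that differences reflect the change being tested rather than sampling noise. The scenario names, processing times, and arrival rate are hypothetical.

```python
import random
import statistics

def mean_wait(seed, mean_proc, mean_interarrival=5.0, n_batches=500):
    """Mean queue wait (hours) over one replication of a single unit."""
    rng = random.Random(seed)
    wait, total = 0.0, 0.0
    for _ in range(n_batches):
        total += wait
        service = rng.expovariate(1.0 / mean_proc)
        gap = rng.expovariate(1.0 / mean_interarrival)
        # Lindley recursion: wait carried over to the next batch.
        wait = max(0.0, wait + service - gap)
    return total / n_batches

def evaluate(mean_proc, seeds=range(30)):
    """Average the metric over replications; the same seeds are
    reused for every scenario (common random numbers)."""
    return statistics.mean(mean_wait(s, mean_proc) for s in seeds)

# Hypothetical scenarios: mean processing time per batch, in hours.
scenarios = {
    "baseline": 4.0,
    "10% faster processing": 3.6,
    "10% slower processing": 4.4,
}
results = {name: evaluate(proc) for name, proc in scenarios.items()}

base = results["baseline"]
for name, value in results.items():
    change = 100.0 * (value - base) / base
    print(f"{name}: mean wait {value:.2f} h ({change:+.0f}% vs baseline)")
```

In this kind of one-at-a-time sweep, a large change in the metric for a modest change in an input flags that input as one worth validating carefully or mitigating in the design.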

A strategy for long-term success

For facility design problems, simulations should ideally be performed at the concept or even the feasibility stage to determine if the right type and size of facility is being considered. For companies looking to modify existing facilities, this information is also needed to be able to make the right decisions when evaluating options.

Simulation models should be considered living documents. These models can also be viewed as a ‘digital twin’ of your facility. Before making significant changes to a facility or an operation within it, simulations can be run to determine the impact of the changes and to develop strategies to overcome any adverse situations. Once a change is made, it is important to update the model. The simulation can then be rerun to confirm that the desired result was obtained. Updating the model is essential so that it continues to reflect the current state of the facility and retains its predictive accuracy.

All markets are trying to get more and more from their facilities. Unfortunately, if you skip over the essential steps of truly optimizing your processes, you may face an extremely high normalized cost of operations. Instead, facility owners can look into the future and get the greatest value by incorporating process simulations from the outset. This methodology will build flexibility and sustainability into any facility, a strategy for long-term success.

About the Author

Niranjan Kulkarni, PhD, is the director of operations improvement at CRB. He holds doctorate and master’s degrees in industrial and systems engineering from Binghamton University. He is a certified Lean Six Sigma Master Black Belt. Niranjan has over 15 years of experience in business process and data modeling, operations and process simulations, process improvements, layout optimizations and supply chain management. He has worked with the pharmaceutical, biotech, food, chemical, semiconductor, electronics assembly and packaging, manufacturing and financial industries.

Kulkarni is a frequent speaker at industry events and contributor to academic research societies. He has authored over 25 peer-reviewed papers published in research journals, conference proceedings and industry periodicals. He is the co-author of the ISPE’s Good Practice Guide on operations management and has also co-authored a book chapter in the prestigious Lecture Notes in Computer Science series.

For more information, visit http://www.crbgroup.com.